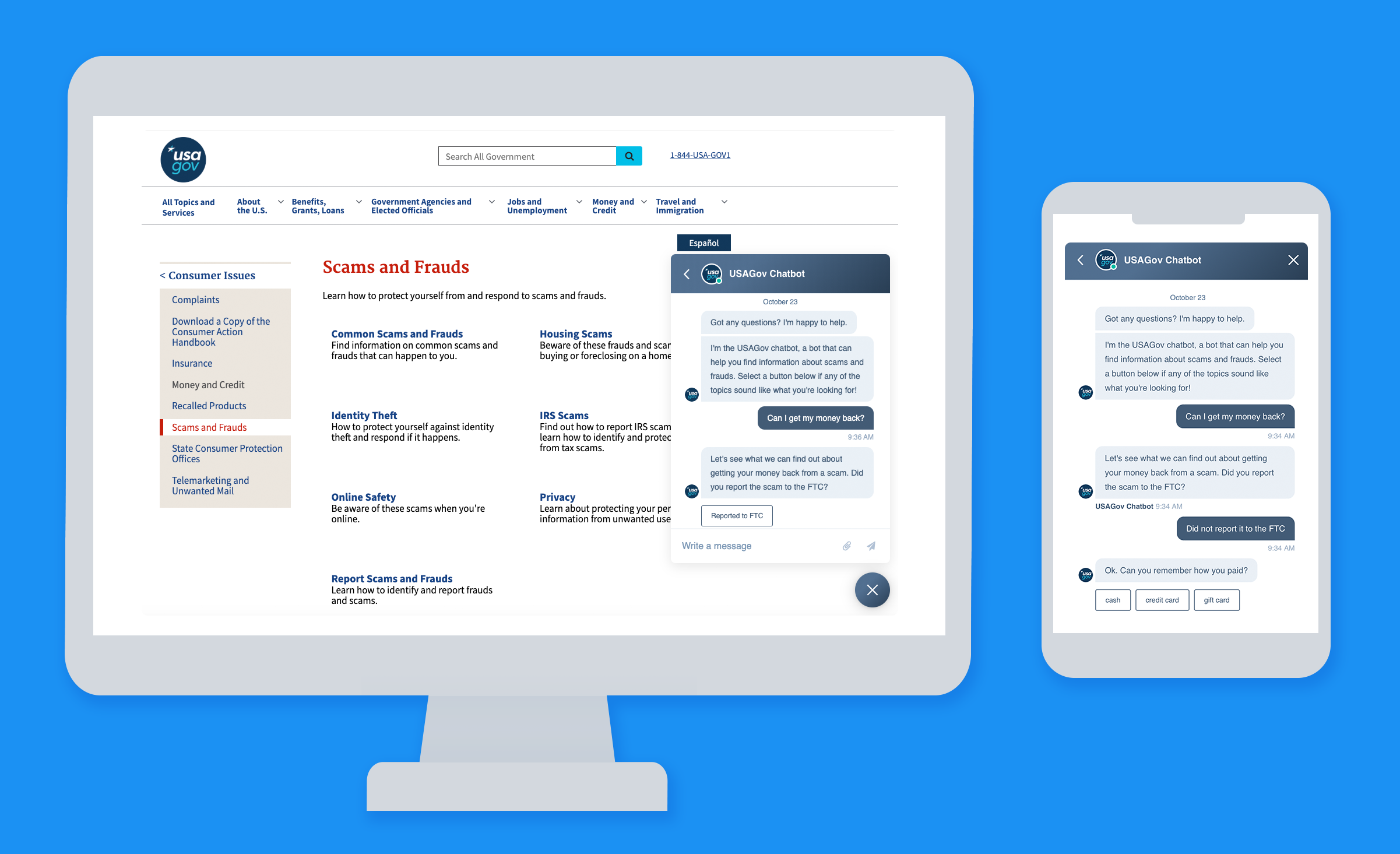

Earlier this year, we piloted our USAGov chatbot to help visitors find answers to their questions about scams and fraud. After the launch, we made tweaks to the interface based on customer feedback and usage data.

This summer, curious to see how people were interacting with the technology, we spoke with a diverse group of nine English-speaking users and watched them try out the chatbot. They taught us a lot about our chatbot specifically, and about how people interact with AI in general.

Every single person had a scam story to tell.

- I bet you do too. Here’s my favorite quote about it: “Well, everybody tries to scam me. It’s called the internet.”

- Through the calls we get to our contact center and searches on our websites, we know how common scams are today. It really reinforces how important it is to give people an easy way to learn about and report scams.

People are familiar—and comfortable—with web chat.

- All of our participants had used web chat before. They see it as an increasingly common feature for interacting with retailers and service providers.

- People had mixed feelings about chatting with a bot and said they prefer to chat with a person. They’ve chatted with bots before, and sometimes the results have been good. But sometimes it’s been a waste of time. The outcome really depends on the complexity of the question and the quality of the AI.

- People don’t mind interacting with a bot initially. In fact, they expect it. They also expect to have an easy way to talk to a person if the bot can’t handle their question.

People like web chat more than the phone for simple questions.

- Many said they actively avoid calling on the phone for help.

- They really dislike lengthy IVRs (interactive voice response menus), those recordings you hear when calling for service (“press 1 for billing questions,” etc.).

- Waiting for a long time in a call queue is unpleasant, and based on their prior experiences, that’s what they expect.

- Having their call transferred from person to person and having to explain their situation over and over feels like a frustrating waste of time.

- A number of participants said that they just don’t like talking to agents on the phone.

But chat offers flexibility. They can multitask while getting an answer to their question.

The USAGov chatbot was very helpful for some tasks, less so for others.

- When they were given a clear, discrete task, like finding out where to report a scam, they found the bot very useful. In this case, it was a simple question and our bot returned a simple answer with a clear call to action.

- When they were given a more nuanced question, like how to determine if something was a real scam or just a rumor, the bot was less helpful. With AI, the quality of your service can only be as good as the information it has to work with.

Should the chatbot have a name?

Many chatbots have their own persona and a name. Ours does not; it’s simply the USAGov Chatbot. We’ve been toying with the idea of creating a chatbot persona, and people had mixed feelings about that idea.

Most people preferred the current name, “USAGov Chatbot,” because:

- “USAGov Chatbot” clearly describes the service.

- A human name is misleading. It gives you the expectation that you’ll be chatting with a real person, and that’s not the case.

- Making it sound “cute” undermines the credibility and the authoritative nature of a government service. Remember, we’re talking about people who have just been scammed. They’re wary of being scammed again.

If we did want to give it a name, participants suggested options like “Sam” (representing Uncle Sam), “George” (for George Washington), and “Rob” (short for Robot).

As always, user research was valuable. Participants gave us good ideas for simple and immediate improvements, like making our chatbot responses more actionable and adding tooltips to our “start a new chat” icon. They also gave us things to consider as we plan for our AI future.
